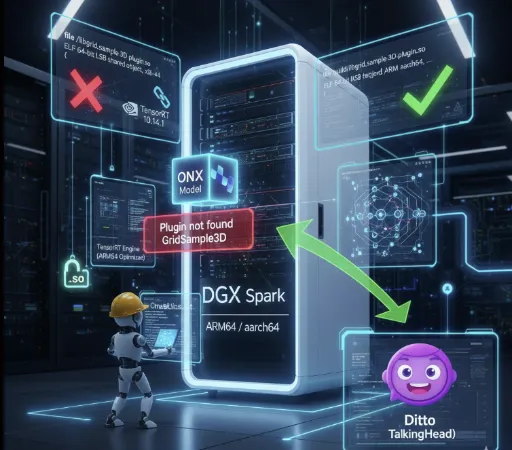

Porting Ditto TalkingHead (DGX Spark / ARM64) to TensorRT – A Detailed Log

Purpose

To run the ditto-talkinghead project on a DGX Spark (ARM64 / aarch64) system, I converted the existing ONNX models into TensorRT engines that work in this environment and verified inference.

Because warp_network.onnx depends on a custom GridSample3D plugin, the core challenge was loading a TensorRT custom plugin (.so) built for the target architecture.

Why This Was Necessary

The Ditto checkpoint includes libgrid_sample_3d_plugin.so, but the binary is compiled for x86‑64.

My setup:

- DGX Spark

- ARM64 (aarch64)

- TensorRT 10.14.1

- CUDA 13.1

Since an x86 plugin cannot be loaded by an ARM TensorRT runtime, the parser failed on warp_network.onnx and the TRT engine could not be created.

Symptoms (Key Failure Log)

During TRT conversion, warp_network produced the following errors:

- Unable to load library: libgrid_sample_3d_plugin.so

- Plugin not found ... GridSample3D

- Fail parsing warp_network.onnx

- Final error:

Network must have at least one output

The root cause was the failure to load the GridSample3D plugin.
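The final message ("Network must have at least one output") is a downstream effect: the build step ran on a network the parser never finished populating. A minimal sketch of a fail-fast parse, assuming the standard TensorRT Python ONNX parser API, is shown here. It is not the project's cvt_onnx_to_trt.py, and the helper names `collect_parser_errors` and `parse_onnx_or_raise` are hypothetical.

```python
def collect_parser_errors(parser):
    """Return the descriptions of all errors recorded by an ONNX parser."""
    return [parser.get_error(i).desc() for i in range(parser.num_errors)]


def parse_onnx_or_raise(onnx_path):
    """Parse an ONNX file and raise immediately if the parser reports errors.

    Any custom plugin library must already be loaded before this is called.
    """
    import tensorrt as trt  # imported lazily; requires a TensorRT install

    logger = trt.Logger(trt.Logger.WARNING)
    builder = trt.Builder(logger)
    network = builder.create_network(0)
    parser = trt.OnnxParser(network, logger)

    if not parser.parse_from_file(onnx_path):
        details = "\n".join(collect_parser_errors(parser))
        raise RuntimeError(f"Failed to parse {onnx_path}:\n{details}")

    # Return every object so the caller keeps them alive until the build ends.
    return logger, builder, network, parser
```

Stopping at the parse step puts the plugin error at the top of the traceback instead of leaving it buried above the builder's complaint about missing outputs.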

Root‑Cause Analysis

1) Verify plugin file existence

The file was indeed present at:

./checkpoints/ditto_onnx/libgrid_sample_3d_plugin.so

So it wasn’t a simple path issue.

2) Check with ldd

Running ldd ./checkpoints/ditto_onnx/libgrid_sample_3d_plugin.so returned:

not a dynamic executable

This hinted at an architecture mismatch.

3) Confirm architecture with file / readelf

The decisive evidence:

- file output: ELF 64-bit LSB shared object, x86-64

- readelf -h output: Machine: Advanced Micro Devices X86-64

The supplied .so is an x86‑64 binary, which cannot be loaded on ARM64.
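The same check can be scripted, which is handy when a checkpoint bundles several binaries. The sketch below reads the `e_machine` field straight from the ELF header and compares it with the host architecture. It assumes a Linux host, where `platform.machine()` reports `aarch64` or `x86_64`; the function names are hypothetical and only the two architectures relevant here are mapped.

```python
import platform
import struct

# e_machine values from the ELF specification (only the two relevant here).
ELF_MACHINES = {0x3E: "x86_64", 0xB7: "aarch64"}


def elf_machine(path):
    """Return the target architecture recorded in an ELF file's header."""
    with open(path, "rb") as f:
        header = f.read(20)
    if len(header) < 20 or header[:4] != b"\x7fELF":
        raise ValueError(f"{path} is not an ELF file")
    # Byte 5 (EI_DATA): 1 = little-endian, 2 = big-endian.
    byte_order = "<" if header[5] == 1 else ">"
    # e_machine is a 16-bit field at offset 18.
    (machine,) = struct.unpack_from(byte_order + "H", header, 18)
    return ELF_MACHINES.get(machine, f"unknown (0x{machine:x})")


def matches_host(path):
    """True if the binary targets the architecture this interpreter runs on."""
    return elf_machine(path) == platform.machine()
```

For the bundled plugin, `elf_machine("./checkpoints/ditto_onnx/libgrid_sample_3d_plugin.so")` would be expected to report `x86_64`, consistent with the `file` and `readelf` output above.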

Solution (Key Insight)

Conclusion

The GridSample3D TensorRT plugin must be rebuilt for ARM64.

Step‑by‑Step Procedure

1) Obtain plugin source

The ditto-talkinghead repository only ships the binary, so I fetched the source from a separate repo:

grid-sample3d-trt-plugin

Key source files:

- grid_sample_3d_plugin.cpp

- grid_sample_3d_plugin.h

- grid_sample_3d.cu

- grid_sample_3d.cuh

2) Verify TensorRT / CUDA environment

The environment details:

- TensorRT Python: 10.14.1.48

- TensorRT library: /usr/lib/aarch64-linux-gnu/libnvinfer.so

- CUDA: 13.1

- GPU capability: (12, 1) → CUDA arch 121

3) Adjust CMake build settings

The original CMake file was written with x86 machines in mind and hard‑coded its GPU architectures, which caused several issues:

- Forced compute_70 (unsupported by the current nvcc)

- Missing include path for cuda_fp16.h

- No explicit TensorRT lib directory

Fixes applied

- Removed hard‑coded CUDA_ARCHITECTURES

- Added TensorRT include and lib paths

- Specified CUDA include path (/usr/local/cuda/targets/sbsa-linux/include)

- Disabled optional test subdirectory build

4) Build the ARM64 plugin successfully

cd /workspace/grid-sample3d-trt-plugin
rm -rf build
mkdir build && cd build
cmake .. \
  -DCMAKE_BUILD_TYPE=Release \
  -DTensorRT_ROOT=/usr \
  -DTensorRT_INCLUDE_DIR=/usr/include/aarch64-linux-gnu \
  -DTensorRT_LIB_DIR=/usr/lib/aarch64-linux-gnu \
  -DCMAKE_CUDA_ARCHITECTURES=121
cmake --build . -j"$(nproc)"

The build produced:

build/libgrid_sample_3d_plugin.so

Confirm the binary is ARM64:

file build/libgrid_sample_3d_plugin.so

Expected output example:

ELF 64-bit LSB shared object, ARM aarch64, …

5) Replace the checkpoint’s x86 plugin

cp /workspace/grid-sample3d-trt-plugin/build/libgrid_sample_3d_plugin.so \
  /workspace/ditto-talkinghead/checkpoints/ditto_onnx/libgrid_sample_3d_plugin.so

6) Verify TensorRT plugin loading

I loaded the rebuilt plugin directly through the TensorRT Python plugin registry and confirmed that it loaded correctly.
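A check of this kind can be sketched as follows. The exact commands used are not recorded in this log, so treat this as one plausible approach. It assumes the plugin registers its creator when the library is loaded (for example via `REGISTER_TENSORRT_PLUGIN`) and that it is an IPluginV2‑style plugin, which is what `plugin_creator_list` enumerates. The creator name `GridSample3D` is taken from the parser error above; `find_creators` and `check_plugin` are hypothetical helper names.

```python
import ctypes


def find_creators(creators, name):
    """Return (name, version) pairs for registered creators matching `name`."""
    return [(c.name, c.plugin_version) for c in creators if c.name == name]


def check_plugin(so_path, plugin_name="GridSample3D"):
    """Load a plugin library and list the matching creators in the registry."""
    import tensorrt as trt  # imported lazily; requires a TensorRT install

    # Loading the library runs its static registration code. An OSError here
    # usually means the file is missing or built for another architecture.
    ctypes.CDLL(so_path, mode=ctypes.RTLD_GLOBAL)

    registry = trt.get_plugin_registry()
    return find_creators(registry.plugin_creator_list, plugin_name)
```

An empty list from `check_plugin(...)` would mean the library loaded but did not register a creator under that name. An `OSError` would point back to the loading problem described earlier.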

7) Rerun ONNX → TensorRT conversion

Running cvt_onnx_to_trt.py now succeeded for the entire model, including warp_network.onnx.

Inference also succeeded.

Current Status

- ✅ GridSample3D custom TensorRT plugin runs on ARM64

- ✅ warp_network.onnx parses correctly

- ✅ ONNX → TensorRT engine conversion succeeds

- ✅ Ditto TalkingHead inference works on DGX Spark / ARM64

In short, the project is fully ported to the DGX Spark platform.

Troubleshooting Notes

1) Checkpoint‑bundled .so files are platform‑specific

A checkpoint may contain binaries compiled for a different ISA. If you’re on an aarch64 machine, suspect an x86 binary first.

2) Plugin rebuild may be required after TensorRT upgrades

When moving to a new major TensorRT version, API/ABI changes can break existing plugins.

3) Avoid hard‑coding CUDA architectures in CMakeLists.txt

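Hard‑coded lists like 70;80;86;89 age badly: a newer nvcc may reject the older entries (as happened here with compute_70), and none of them targets a newer GPU. Use the actual device capability instead, e.g., (12,1) → 121.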

python - <<'PY'
import torch
print(torch.cuda.get_device_capability())
PY
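The conversion from capability tuple to CMake value is just digit concatenation. A small sketch, assuming PyTorch with CUDA support is installed for the detection step (the function names are hypothetical):

```python
def capability_to_cmake_arch(capability):
    """Turn a (major, minor) compute capability into a CMake arch string."""
    major, minor = capability
    return f"{major}{minor}"


def detect_cmake_arch():
    """Query the current GPU via PyTorch and return its CMake arch string."""
    import torch  # imported lazily; requires a CUDA-enabled PyTorch build

    return capability_to_cmake_arch(torch.cuda.get_device_capability())


print(capability_to_cmake_arch((12, 1)))  # prints 121
```

The result can be passed straight to the configure step as `-DCMAKE_CUDA_ARCHITECTURES=<value>`.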

Environment Summary

- Platform: DGX Spark

- Architecture: aarch64 (ARM64)

- CUDA: 13.1

- TensorRT: 10.14.1

- Python: 3.12

- GPU capability: 12.1 (CMake CUDA arch = 121)
